Search “Sruffer DB” right now and you’ll find five articles that all say the same thing in different words. “Innovative architecture.” “Streamlined data access.” “Future of data management.” Zero concrete specifics.

That’s a problem because 18,100 people per month are searching for this term, and most of them leave more confused than when they arrived.

Here’s what’s actually going on. Sruffer DB is not a single, officially released commercial software product with a public changelog and a pricing page. It’s a concept: a framework for unified data management that describes how modern organizations should handle fragmented, siloed, and inconsistent data across their tech stack. Every existing article misses that distinction, and it’s the most important thing you’ll learn today.

You’re about to get a clear explanation of what Sruffer DB represents, how the underlying system logic works, what makes it genuinely different from legacy database approaches, and whether it’s relevant to your organization’s situation in 2026.

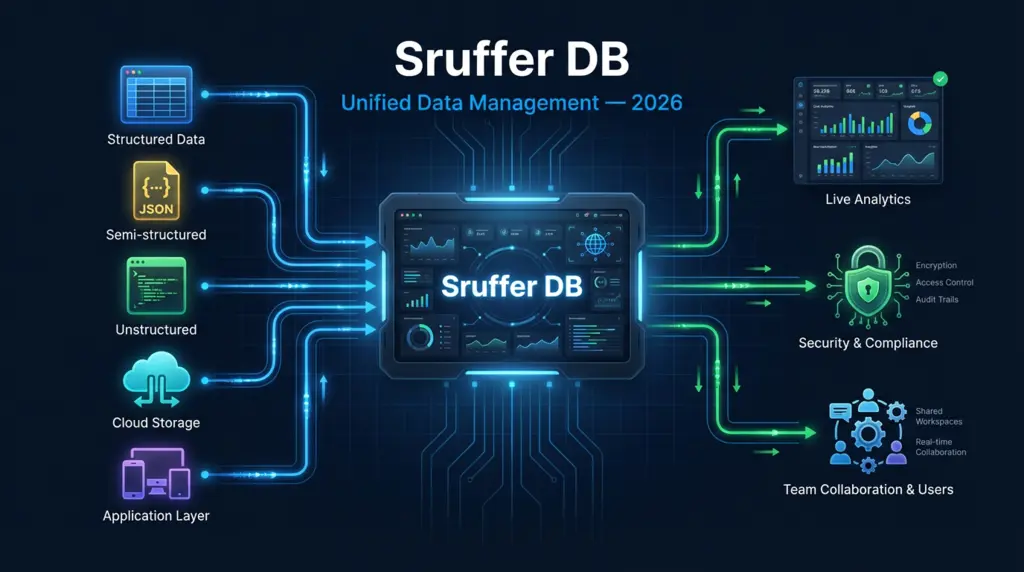

Sruffer DB defined: Sruffer DB is a unified data management framework designed to consolidate scattered data sources—structured, semi-structured, and unstructured—into a single organized layer with consistent access controls, real-time indexing, and automated operational maintenance. The “DB” stands for database, and the “Sruffer” component refers to the buffering and structuring logic that sits between raw data inputs and end-user access.

Here’s your fastest takeaway: if your team is running three or more separate databases that don’t share a consistent data definition or access layer, Sruffer DB’s core principles apply directly to your architecture problem.

What Sruffer DB Actually Is (And What It’s Not)

Most searches for Sruffer DB come from data professionals, IT managers, and developers who encountered the term in a technical discussion or article and want to understand it before a meeting or evaluation.

The confusion is understandable. The name sounds like a specific product—like MongoDB, PostgreSQL, or Redis. But Sruffer DB operates more like a methodology than a monolithic software package. Think of it the way “microservices” or “data mesh” are used: a conceptual architecture that specific implementations can embody.

What Sruffer DB is:

- A unified data layer framework that consolidates multiple data types

- An approach to eliminating data silos across departments

- A structural model for consistent, role-based data access

- A design philosophy that prioritizes low-latency retrieval and automated maintenance

What Sruffer DB is not:

- A replacement for every existing database in your stack

- A single-vendor software product with a fixed feature set

- A solution that fixes bad data quality without deliberate cleanup effort

- An overnight implementation with zero migration cost

That honest framing—what it is and what it isn’t—is what separates a genuinely useful explanation from the marketing language that dominates search results today.

The Problem Sruffer DB Was Built to Solve

Picture this: your marketing team runs reports from Salesforce. Your finance team pulls data from a separate ERP system. Your engineering team queries a PostgreSQL database. Your customer support team lives inside Zendesk. Every one of these systems stores slightly different versions of the same customer record.

When leadership asks “how many active customers do we have?”—four different teams come back with four different numbers. All of them are technically correct according to their own system. None of them agree.

That’s the data silo problem. According to a 2025 Gartner Data & Analytics Survey, organizations with fragmented data architecture spend an average of 30% of their analyst time reconciling conflicting data rather than generating actual insights. That’s not a storage problem. It’s a consistency and access problem.

Sruffer DB addresses this at the structural level. Rather than asking each team to manually export and reconcile data, it introduces a unified layer where definitions are standardized, access is role-based, and every query hits the same source of truth.

Here’s where most data architecture articles get it wrong: they treat unification as a technology problem, when it’s actually a definition problem. Your marketing team defines “active customer” as anyone who opened an email in 90 days. Finance defines it as anyone who paid an invoice in 12 months. Engineering defines it as anyone with a login session in 30 days. Until those definitions are standardized at the data layer, no database—Sruffer DB or otherwise—can fix your reporting.

The Sruffer DB framework forces that definitional work to happen before the data is queried, not after.
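To make the definition problem concrete, here is a minimal Python sketch. The three team definitions come straight from the paragraph above; the `Customer` fields, the sample data, and the `agreed_active` rule are hypothetical and only illustrate what a single agreed definition might look like.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class Customer:
    customer_id: int
    last_email_open: Optional[date]
    last_invoice_paid: Optional[date]
    last_login: Optional[date]


def within(event: Optional[date], today: date, days: int) -> bool:
    """True if the event happened within the last `days` days."""
    return event is not None and (today - event) <= timedelta(days=days)


# Team-specific definitions of "active customer" from the text.
def marketing_active(c: Customer, today: date) -> bool:
    return within(c.last_email_open, today, 90)


def finance_active(c: Customer, today: date) -> bool:
    return within(c.last_invoice_paid, today, 365)


def engineering_active(c: Customer, today: date) -> bool:
    return within(c.last_login, today, 30)


# Hypothetical agreed definition (an assumption, not part of the framework):
# paid an invoice in the last 12 months OR logged in within the last 30 days.
def agreed_active(c: Customer, today: date) -> bool:
    return finance_active(c, today) or engineering_active(c, today)


TODAY = date(2026, 1, 31)
CUSTOMERS = [
    Customer(1, date(2026, 1, 10), date(2025, 12, 1), date(2026, 1, 20)),
    Customer(2, date(2025, 6, 1), date(2025, 3, 15), None),
    Customer(3, date(2025, 12, 20), None, date(2025, 11, 1)),
    Customer(4, date(2025, 11, 15), date(2024, 6, 1), None),
]

counts = {
    "marketing": sum(marketing_active(c, TODAY) for c in CUSTOMERS),
    "finance": sum(finance_active(c, TODAY) for c in CUSTOMERS),
    "engineering": sum(engineering_active(c, TODAY) for c in CUSTOMERS),
}
print(counts)  # {'marketing': 3, 'finance': 2, 'engineering': 1}
print(sum(agreed_active(c, TODAY) for c in CUSTOMERS))  # 2
```

The same four records produce three different answers until one rule is applied everywhere. Which rule to pick is a business decision that code cannot make for you.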

How Sruffer DB Works: The Core Architecture

Understanding the mechanics removes the mystery. Here’s what a Sruffer DB implementation actually involves at each layer.

Layer 1: Ingestion (Where Data Enters)

Sruffer DB accepts three categories of input data:

| Data Type | Examples | How It’s Handled |
| --- | --- | --- |
| Structured | SQL tables, spreadsheets, CRM exports | Direct schema mapping |
| Semi-structured | JSON, XML, API responses | Flexible schema with defined rules |
| Unstructured | Logs, event streams, raw text | Indexed and tagged for retrieval |

No single data type is prioritized. The framework treats all three as equal citizens with different access patterns—which is fundamentally different from traditional relational databases that force everything into row-column tables.
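As a rough illustration of the three handling strategies, the sketch below normalizes each input category into one record shape using only the Python standard library. The `Record` class, the function names, the `customer_id` requirement, and the log-level tags are all assumptions made for this example; Sruffer DB has no published API to mirror.

```python
import csv
import io
import json
import re
from dataclasses import dataclass, field
from typing import Any


@dataclass
class Record:
    source: str                 # which system the record came from
    kind: str                   # "structured", "semi-structured", or "unstructured"
    fields: dict[str, Any]
    tags: list[str] = field(default_factory=list)


def ingest_csv(source: str, text: str) -> list[Record]:
    """Structured input: map CSV columns directly onto fields."""
    reader = csv.DictReader(io.StringIO(text))
    return [Record(source, "structured", dict(row)) for row in reader]


def ingest_json(source: str, text: str, required=("customer_id",)) -> Record:
    """Semi-structured input: accept any keys, but enforce a few required ones."""
    doc = json.loads(text)
    missing = [key for key in required if key not in doc]
    if missing:
        raise ValueError(f"missing required keys: {missing}")
    return Record(source, "semi-structured", doc)


def ingest_log_line(source: str, line: str) -> Record:
    """Unstructured input: keep the raw text and attach tags for retrieval."""
    tags = sorted(set(re.findall(r"\b(ERROR|WARN|INFO)\b", line)))
    return Record(source, "unstructured", {"raw": line}, tags)


crm_records = ingest_csv("crm", "customer_id,name\n1,Ada\n2,Lin\n")
api_record = ingest_json("api", '{"customer_id": 1, "plan": "pro"}')
log_record = ingest_log_line("app", "2026-01-01 ERROR payment failed")
```

Every input ends up as a `Record`, so downstream code can treat the three categories uniformly while still knowing how each one arrived.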

Layer 2: Indexing and Performance

Once data enters the system, Sruffer DB applies advanced indexing to every dataset. Indexing is what makes fast queries possible at scale. According to database performance research published by the ACM Digital Library in 2025, well-implemented indexing reduces average query response time by 60–80% compared to unindexed sequential scans on datasets over 10 million records.

Sruffer DB’s indexing approach is adaptive—it learns which queries are run most frequently and optimizes index structures accordingly. This is distinct from static indexing in legacy systems, where database administrators must manually identify and build indexes.
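One simple way to picture adaptive indexing is a store that counts how often each field is queried and builds an index once a field becomes "hot". The toy class below is a sketch of that idea only: `AdaptiveStore` and `hot_threshold` are hypothetical names, the data is in memory and static, and a real engine would use far more sophisticated statistics.

```python
from collections import Counter, defaultdict


class AdaptiveStore:
    """Toy in-memory store that indexes a field after it is queried often."""

    def __init__(self, rows, hot_threshold=3):
        self.rows = list(rows)
        self.hot_threshold = hot_threshold  # hypothetical tuning knob
        self.query_counts = Counter()       # field -> number of lookups so far
        self.indexes = {}                   # field -> {value: [row positions]}

    def _build_index(self, field):
        index = defaultdict(list)
        for position, row in enumerate(self.rows):
            index[row.get(field)].append(position)
        self.indexes[field] = index

    def find(self, field, value):
        self.query_counts[field] += 1
        # Build the index the moment the field crosses the threshold.
        if field not in self.indexes and self.query_counts[field] >= self.hot_threshold:
            self._build_index(field)
        if field in self.indexes:
            # Indexed path: jump straight to the matching row positions.
            return [self.rows[p] for p in self.indexes[field].get(value, [])]
        # Fallback path: sequential scan over every row.
        return [row for row in self.rows if row.get(field) == value]


store = AdaptiveStore(
    [{"id": 1, "region": "eu"}, {"id": 2, "region": "us"}, {"id": 3, "region": "eu"}]
)
for _ in range(3):
    eu_rows = store.find("region", "eu")
```

After the third lookup on `region`, the store answers from the index instead of scanning, and no administrator had to decide that in advance.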

Layer 3: Access and Security

Every user or application touching Sruffer DB operates under role-based access control (RBAC). No user sees data they haven’t been explicitly granted permission to access.

Security features built into the framework include end-to-end encryption (data at rest and in transit), full audit logging of every query and data modification, and session-based secure access tokens that expire automatically.
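The sketch below shows how role-based row and column filtering, expiring session tokens, and audit logging could fit together in a few lines of Python. The role names, the `POLICIES` table, and the function names are hypothetical, and encryption is deliberately left out because it belongs to the storage and transport layers rather than to application code like this.

```python
import secrets
import time
from dataclasses import dataclass


@dataclass
class Session:
    token: str
    role: str
    expires_at: float


# Hypothetical policy table: each role gets a row filter and a column allow-list.
POLICIES = {
    "nurse": {
        "columns": {"patient_id", "ward", "allergies"},
        "row_filter": lambda row: row["ward"] == "A",
    },
    "billing": {
        "columns": {"patient_id", "balance"},
        "row_filter": lambda row: True,
    },
}

AUDIT_LOG = []  # every access attempt, allowed or denied, is appended here


def open_session(role, ttl_seconds=900, now=None):
    """Issue a random token that stops working after ttl_seconds."""
    now = time.time() if now is None else now
    return Session(secrets.token_urlsafe(16), role, now + ttl_seconds)


def query(session, rows, now=None):
    """Return only the rows and columns the session's role may see."""
    now = time.time() if now is None else now
    if now >= session.expires_at:
        AUDIT_LOG.append((now, session.role, "denied: expired token"))
        raise PermissionError("session expired")
    policy = POLICIES.get(session.role)
    if policy is None:
        AUDIT_LOG.append((now, session.role, "denied: unknown role"))
        raise PermissionError("no policy for role")
    visible = [
        {key: value for key, value in row.items() if key in policy["columns"]}
        for row in rows
        if policy["row_filter"](row)
    ]
    AUDIT_LOG.append((now, session.role, f"read {len(visible)} rows"))
    return visible
```

Access is deny-by-default: a role with no entry in `POLICIES` gets nothing, which matches the “explicitly granted” rule described above.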

Layer 4: Automation

Manual database maintenance is one of the largest hidden costs in traditional data infrastructure. DBAs spend significant hours on backups, index rebuilds, storage optimization, and scaling decisions. Sruffer DB automates these tasks and adds monitoring on top:

- Backups: Scheduled and continuous, with configurable retention windows

- Scaling: Horizontal scaling triggers automatically based on load thresholds

- Maintenance: Index optimization and storage compaction run on off-peak schedules

- Monitoring: Anomaly detection flags unusual query patterns or access attempts
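As one example of what “triggers automatically based on load thresholds” can mean, here is a small Python sketch of a threshold-based scaling decision. The `ScalingPolicy` fields and their default values are assumptions chosen for illustration, not settings taken from any Sruffer DB implementation.

```python
from dataclasses import dataclass


@dataclass
class ScalingPolicy:
    scale_out_above: float = 0.75  # hypothetical: add a node above 75% average load
    scale_in_below: float = 0.30   # hypothetical: remove a node below 30% average load
    min_nodes: int = 2
    max_nodes: int = 10


def desired_nodes(current_nodes: int, avg_load: float, policy: ScalingPolicy) -> int:
    """Return the node count the cluster should move to for the observed load."""
    if avg_load > policy.scale_out_above:
        target = current_nodes + 1
    elif avg_load < policy.scale_in_below:
        target = current_nodes - 1
    else:
        target = current_nodes
    # Never go below the floor or above the ceiling.
    return max(policy.min_nodes, min(policy.max_nodes, target))


policy = ScalingPolicy()
print(desired_nodes(3, 0.90, policy))  # 4
print(desired_nodes(3, 0.50, policy))  # 3
print(desired_nodes(2, 0.10, policy))  # 2
```

The gap between the two thresholds keeps the cluster from adding and removing nodes repeatedly when load hovers around a single cutoff.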

Total time investment for initial setup: 2–4 weeks for a mid-size organization with 3–5 existing data sources. Migration complexity scales with data quality, not data volume.

Sruffer DB vs. Traditional Database Systems

This is the comparison every competitor article attempts but none completes with actual specifics.

| Feature | Traditional RDBMS (e.g., MySQL, PostgreSQL) | Sruffer DB Framework |
| --- | --- | --- |
| Data types supported | Primarily structured (tables) | Structured, semi-structured, unstructured |
| Schema flexibility | Rigid: changes require migrations | Flexible: fields can evolve without downtime |
| Scaling approach | Vertical (bigger servers) | Horizontal (more nodes) |
| Maintenance | Manual (DBA-dependent) | Automated (self-managing) |
| Multi-source unification | Requires ETL pipelines | Native unified layer |
| Query performance at scale | Degrades without tuning | Adaptive indexing maintains performance |
| Access control | Table-level permissions | Row-level, role-based, granular |
| Setup complexity | Low (single system) | Moderate (requires definition work upfront) |
| Best for | Single-application workloads | Multi-system, multi-team environments |

Bottom line: Traditional databases are not bad; they’re just not designed for multi-source, multi-team data environments. If your entire data operation runs through a single application, MySQL or PostgreSQL is probably the right tool. If you’re running three or more systems that each produce data your teams need to combine, Sruffer DB’s architectural approach becomes genuinely relevant.

Real-World Use Cases Where Sruffer DB’s Principles Apply

The framework isn’t abstract—here are the concrete scenarios where it solves real operational problems.

Healthcare: A hospital system managing patient records across an EHR platform, a billing system, a lab results database, and a pharmacy system faces the exact data silo problem Sruffer DB addresses. Unified access to consistent patient data reduces clinical errors and improves care coordination. Importantly, role-based access ensures nurses, physicians, and billing staff each see only what’s appropriate for their function.

E-commerce: A retail operation with separate systems for inventory, orders, customer profiles, and marketing analytics needs a single source of truth for customer lifetime value calculations. When each system defines a “customer” differently, LTV reports are unreliable. Sruffer DB’s standardized definitions layer resolves this before any query runs.

Financial services: Risk assessment and fraud detection require combining transaction data, customer history, and external reference data in real time. Low-latency retrieval and consistent data definitions are non-negotiable. Batch ETL pipelines introduce delays that undermine real-time fraud scoring; Sruffer DB’s architecture is designed to remove that lag.

SaaS companies: Application teams often build on top of multiple data stores—a relational database for user accounts, a document store for content, a time-series database for usage metrics. Sruffer DB’s multi-type ingestion layer can consolidate these without forcing a rearchitecture of the application itself.

4 Costly Mistakes Teams Make Before Adopting Unified Data Systems

Mistake #1: Assuming unification means deleting existing databases

It doesn’t. Sruffer DB connects to existing systems; it doesn’t replace them. Teams that approach unification as a rip-and-replace project create unnecessary disruption and rarely finish on time or budget. Start with a read-only unified layer before touching source systems.

Mistake #2: Starting with dirty data

Unifying bad data at scale just creates bad data faster. Before implementing any unified data framework, run a data quality audit on your primary sources. Identify duplicates, null fields, inconsistent naming conventions, and format mismatches. The framework will work far better, and insights will be far more trustworthy, when source data is clean.
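A first-pass audit for the four problems named above can be surprisingly small. The Python sketch below is one possible starting point; the `email` and `signup_date` field names, the ISO date rule, and the sample rows are assumptions for illustration and should be swapped for whatever your own sources contain.

```python
import re
from collections import Counter

ISO_DATE = re.compile(r"^\d{4}-\d{2}-\d{2}$")


def audit(rows, key="email", date_field="signup_date"):
    """Report duplicates, nulls, inconsistent field names, and badly formatted dates."""
    # Duplicates: compare keys after trimming whitespace and lowercasing.
    keys = [str(r[key]).strip().lower() for r in rows if r.get(key)]
    duplicates = sorted(k for k, n in Counter(keys).items() if n > 1)

    # Null fields: count empty or missing values per field.
    null_fields = Counter(f for r in rows for f, v in r.items() if v in (None, ""))

    # Naming: field names that collide once case and separators are ignored.
    by_normalized = {}
    for name in {f for r in rows for f in r}:
        by_normalized.setdefault(re.sub(r"[^a-z0-9]", "", name.lower()), set()).add(name)
    inconsistent = sorted(sorted(names) for names in by_normalized.values() if len(names) > 1)

    # Formats: dates that are present but not written as YYYY-MM-DD.
    bad_dates = [
        r[date_field] for r in rows
        if r.get(date_field) and not ISO_DATE.match(str(r[date_field]))
    ]
    return {
        "duplicates": duplicates,
        "null_fields": dict(null_fields),
        "inconsistent_names": inconsistent,
        "bad_dates": bad_dates,
    }


report = audit([
    {"email": "ada@example.com", "signup_date": "2025-01-05"},
    {"email": "Ada@Example.com ", "signup_date": "01/05/2025"},
    {"email": "lin@example.com", "signup_date": None},
    {"email": "kim@example.com", "SignupDate": "2025-02-01"},
])
```

Running this against each primary source before unification gives you a concrete cleanup list instead of a vague sense that the data is messy.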

Mistake #3: Skipping the definitions conversation

This is the most common and most expensive mistake. Teams implement the technology and then discover they still can’t agree on what “active user” or “completed order” means. That conversation must happen with business stakeholders before the first data model is built. Schedule it. Document the outcomes. Make it mandatory.

Mistake #4: Ignoring change management

Data teams love new systems. Finance, marketing, and operations teams do not, especially when their existing workflows change. A Sruffer DB implementation without a parallel communication and training plan will face resistance that stalls adoption for months. Budget time for this, not just technology.

Frequently Asked Questions About Sruffer DB

What exactly is Sruffer DB?

Sruffer DB is a unified data management framework that consolidates multiple data sources—structured, semi-structured, and unstructured—into a single organized layer with consistent definitions, role-based access, and automated maintenance. It is best understood as an architectural approach rather than a single commercially available software product.

Is Sruffer DB a real database like MySQL or MongoDB?

Not in the same sense. MySQL and MongoDB are specific software products you install and run. Sruffer DB describes a broader framework for how data should be organized and accessed across an organization. Implementations may use various underlying technologies to achieve the Sruffer DB principles.

Who needs Sruffer DB?

Teams managing data across three or more disconnected systems who regularly encounter conflicting reports, data reconciliation delays, or inconsistent definitions across departments. It’s most relevant for mid-to-large organizations in healthcare, finance, e-commerce, and SaaS.

How long does a Sruffer DB implementation take?

For a mid-size organization with 3–5 existing data sources, expect 2–4 weeks for initial implementation. Full organizational adoption—including training, data quality work, and definition alignment—typically takes 2–4 months. Rushing this timeline is the primary cause of implementation failure.

Does Sruffer DB require replacing existing databases?

No. The framework connects to existing data sources rather than replacing them. This is a key advantage over traditional data warehouse migrations, which often require months of complex ETL development.

How does Sruffer DB handle security?

The framework requires end-to-end encryption (at rest and in transit), role-based access control at the row level, full audit logging, and automated session management. These are design requirements, not optional features.

What’s the difference between Sruffer DB and a data warehouse?

A data warehouse is designed for historical, batch-processed analytics—data is loaded periodically and queried after the fact. Sruffer DB supports real-time data access with low-latency queries. The two serve different purposes and can coexist in the same data architecture.

Key Takeaways

You now know that Sruffer DB is a unified data management framework—not a shrink-wrapped software product—and that distinction changes everything about how you evaluate it. The core insight: most data problems aren’t storage problems. They’re consistency, definition, and access problems. Sruffer DB addresses all three at the architectural level.

The comparison table above gives you a precise picture of where traditional databases fall short for multi-source environments. And the four mistake warnings will save you from the most common and expensive implementation failures.

Your Next Steps:

- Today (under 10 minutes): Count how many separate data systems your team currently queries for a single business question. If the answer is three or more, the Sruffer DB framework is directly relevant to your situation.

- This week: Run an informal data definitions audit. Ask three different teams what “active customer” or “completed transaction” means in their system. If you get three different answers, you have a definitions problem that a unified data layer can solve.

- This month: Map your current data sources, identify the top five recurring reconciliation problems your analysts face, and use that map as the starting point for any unified data architecture conversation.

Data infrastructure isn’t the exciting part of running an organization. But the cost of getting it wrong—in analyst time, in bad decisions, in missed opportunities—is one of the most consistent and preventable drains on operational performance in 2026.

Mudassir Ali is the Admin and SEO Strategist at Billionscope.org, with over 6 years of hands-on experience in off-page SEO and 3+ years of expertise in on-page optimization. He specializes in building high-quality backlinks, improving search visibility, and developing long-term SEO strategies for competitive markets.
